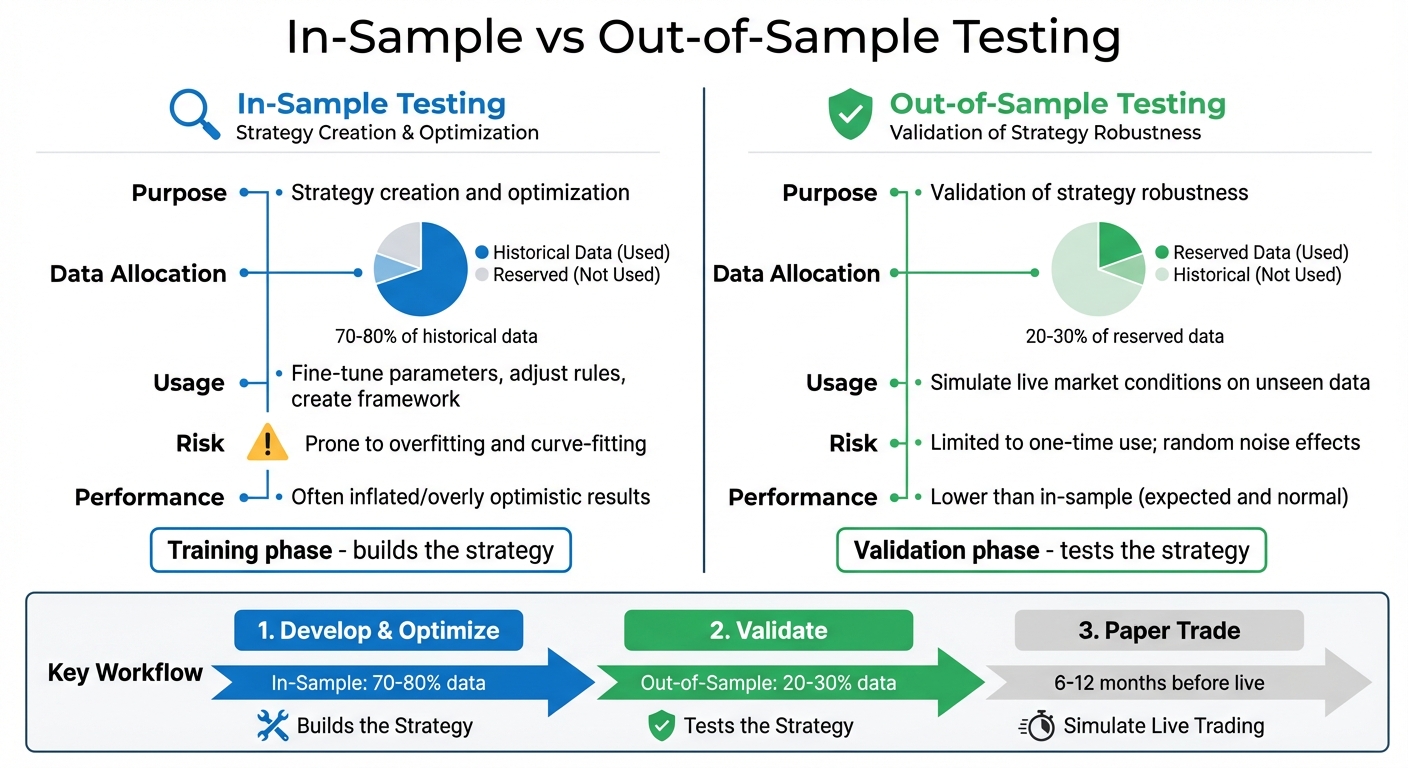

How to use in-sample and out-of-sample testing to build and validate trading strategies, avoid overfitting, and apply proper data splits and validation.

When developing trading strategies, in-sample testing and out-of-sample testing are two critical steps to improve reliability. Here’s the key takeaway: in-sample testing is used to build and refine strategies using historical data, while out-of-sample testing validates those strategies on new, unseen data to better simulate live market conditions. This process helps minimize the risk of overfitting - when a strategy performs well on past data but breaks down in future markets. For traders evaluating ideas more systematically, LuxAlgo’s AI Backtesting Assistant can help surface and compare strategy concepts before capital is put at risk.

Key Points

- In-Sample Testing:
  - Purpose: Fine-tune and optimize strategies.
  - Data: Often uses 70–80% of historical data, ideally across multiple market regimes.
  - Risk: Prone to overfitting, so results can look better than they would in live trading.
- Out-of-Sample Testing:
  - Purpose: Validate strategy robustness.
  - Data: Commonly uses the remaining 20–30% of untouched historical data.
  - Risk: Limited to one meaningful validation pass; weak results often expose overfitting.

Quick Comparison

| Criterion | In-Sample Testing | Out-of-Sample Testing |
|---|---|---|
| Purpose | Strategy creation and optimization | Validation of strategy robustness |
| Data Allocation | 70–80% of historical data | 20–30% reserved for validation |
| Risk | Overfitting due to repeated tweaking | Limited reuse; random noise can still distort results |

In-Sample vs Out-of-Sample Testing: Key Differences for Trading Strategies

1. In-Sample Testing

Purpose

In-sample testing serves as the foundation for developing and refining your trading strategy. This is where you fine-tune parameters, adjust rules, and create the framework for your model. As David Bergstrom from Build Alpha explains:

"The in-sample data is the portion of data used to develop the initial strategy, run backtests, optimize parameters, make tweaks, add filters, delete rules, etc." [3]

The main objective here is to establish a benchmark for performance. If your strategy struggles during this phase, it’s unlikely to perform well on new, unseen data. In-sample testing also helps uncover major flaws in the logic of your strategy before moving forward. If you want to translate those rules into Pine Script for TradingView, LuxAlgo Quant is especially relevant because it is purpose-built for generating, validating, and debugging TradingView indicators and strategies.

Data Usage

Traders typically allocate 70% of historical data for in-sample testing, leaving the remaining 30% for out-of-sample validation [3]. Other common splits include 80/20 or 67/33 [2][1]. The critical factor is ensuring the in-sample period covers a complete market cycle, including both bull and bear markets, so the model is exposed to more than one environment.
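
A 70/30 split like the one described above can be sketched in a few lines of Python. This is a minimal illustration rather than a prescribed method: the `chronological_split` helper and the synthetic `prices` series are hypothetical, and the sketch assumes the data is ordered oldest first, so the older portion becomes the in-sample segment.

```python
from typing import Sequence, Tuple, TypeVar

T = TypeVar("T")


def chronological_split(
    bars: Sequence[T], in_sample_fraction: float = 0.7
) -> Tuple[Sequence[T], Sequence[T]]:
    """Split time-ordered data into in-sample and out-of-sample segments.

    The data is never shuffled: the first part becomes the in-sample
    segment and the remainder is held back for out-of-sample validation.
    """
    if not 0.0 < in_sample_fraction < 1.0:
        raise ValueError("in_sample_fraction must be between 0 and 1")
    cut = round(len(bars) * in_sample_fraction)
    return bars[:cut], bars[cut:]


# Hypothetical example: 1,000 daily closing prices, oldest first.
prices = [100.0 + 0.1 * i for i in range(1000)]
in_sample, out_of_sample = chronological_split(prices, 0.7)
print(len(in_sample), len(out_of_sample))
```

Changing `in_sample_fraction` to 0.8 or 0.67 gives the other common splits. The key design choice is that the split is by position in time, not by random sampling, so no future bar leaks into the development segment.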

Some experts suggest using the most recent data for in-sample testing instead of older data. This can help align your strategy with more current market structure, volatility behavior, and execution realities [1][3]. Regardless of the approach, professional-grade backtesting often uses 5 to 20 years of historical data to account for different market conditions [2]. Traders using structured research workflows can also compare rules inside LuxAlgo’s AI Backtesting Assistant documentation to understand how broader validation and strategy exploration fit into a modern testing pipeline.

Risk Exposure

One of the biggest pitfalls of in-sample testing is overfitting, also known as curve-fitting. This happens when your strategy becomes too tailored to the historical data, capturing noise rather than genuine market signals. Consider this: after testing just 7 different strategy configurations, you can expect to find at least one 2-year backtest with an annualized Sharpe ratio above 1, even if the strategy’s true out-of-sample Sharpe ratio is zero [6]. The more configurations you try, the higher the best result climbs by chance alone.
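
The effect of trying several configurations can be illustrated with a small simulation. The sketch below is an assumption-laden toy, not the method used in [6]: it generates strategies whose daily returns are pure Gaussian noise with zero mean (so their true Sharpe ratio is zero), picks the best of 7 over a 2-year window, and averages that best value across repeated trials. The function names, the 1% daily volatility, and the 252-day year are all illustrative choices.

```python
import math
import random


def annualized_sharpe(daily_returns, periods_per_year=252):
    """Annualized Sharpe ratio of daily returns (risk-free rate assumed zero)."""
    n = len(daily_returns)
    mean = sum(daily_returns) / n
    variance = sum((r - mean) ** 2 for r in daily_returns) / (n - 1)
    return mean / math.sqrt(variance) * math.sqrt(periods_per_year)


def average_best_sharpe(n_configs=7, years=2, n_trials=200, seed=42):
    """Average of the best backtest Sharpe among n_configs skill-less strategies."""
    rng = random.Random(seed)
    n_days = 252 * years
    best_per_trial = []
    for _trial in range(n_trials):
        sharpes = []
        for _config in range(n_configs):
            # Pure noise: the true mean return is zero, so the true Sharpe is zero.
            returns = [rng.gauss(0.0, 0.01) for _day in range(n_days)]
            sharpes.append(annualized_sharpe(returns))
        best_per_trial.append(max(sharpes))
    return sum(best_per_trial) / n_trials


result = average_best_sharpe()
print(f"Average best-of-7 Sharpe on pure noise: {result:.2f}")
```

Raising `n_configs` pushes the average best Sharpe higher even though none of the simulated strategies has any edge, which is the selection effect described above.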

To reduce that risk, incorporate parameter sensitivity testing during this stage. Small adjustments to input values should not cause your strategy’s performance to collapse [1]. And once a strategy fails in out-of-sample testing, do not return to the in-sample data to keep tweaking it. Doing so effectively turns your validation data into training data, which defeats the purpose of the split [2][1]. If your process involves custom scripts, Quant’s documentation is useful for understanding how an AI coding agent specialized in Pine Script can help validate implementation details before broader testing.
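
Parameter sensitivity testing can be sketched as scoring a parameter together with its neighbours and measuring how far performance falls. In the illustration below, `toy_backtest` is a hypothetical stand-in for a real backtest, and `sensitivity_report` and `worst_relative_drop` are made-up helper names; only the idea of comparing nearby settings comes from the text.

```python
from typing import Callable, Dict


def sensitivity_report(
    score: Callable[[int], float], base: int, step: int = 1, width: int = 2
) -> Dict[int, float]:
    """Score the base parameter and its neighbours at base +/- step, 2*step, ..."""
    return {base + k * step: score(base + k * step) for k in range(-width, width + 1)}


def worst_relative_drop(report: Dict[int, float], base: int) -> float:
    """Largest fractional drop versus the base score (0.25 means 25% worse)."""
    base_score = report[base]
    if base_score == 0:
        raise ValueError("base score is zero, relative drop is undefined")
    return max((base_score - s) / abs(base_score) for s in report.values())


# Hypothetical stand-in for a backtest: net profit as a function of a lookback length.
def toy_backtest(lookback: int) -> float:
    return 1000.0 - 5.0 * (lookback - 20) ** 2


report = sensitivity_report(toy_backtest, base=20, step=2, width=2)
drop = worst_relative_drop(report, base=20)
print(report)
print(f"Worst drop across neighbours: {drop:.0%}")
```

A small worst-case drop suggests the chosen value sits on a broad plateau. A large one suggests the result depends on one exact setting, which is a common symptom of curve-fitting.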

Performance Expectations

In-sample testing often produces inflated performance results because the strategy has been optimized specifically for that dataset. As The Robust Trader points out:

"In-sample evaluation is subject to overfitting, while out-of-sample evaluation is a more reliable indicator of a model's true performance." [2]

Think of in-sample results as your strategy’s ideal scenario. The model has been tailored to maximize performance during this specific historical period, potentially "memorizing" patterns that may not reoccur. That’s why further validation through out-of-sample testing is essential to gauge real-world reliability.

2. Out-of-Sample Testing

Purpose

Out-of-sample testing plays a critical role in checking whether your trading strategy can handle real-world market dynamics without simply memorizing past data. As David Bergstrom from Build Alpha explains:

"Out of sample testing is a research effort and first line of defense to discover overfit and curve fit trading strategies that are destined to fail in live trading." [3]

This phase works as a simulation of live trading conditions, helping you eliminate strategies that are unlikely to perform well in actual markets. It protects capital by filtering out unreliable systems before real money is on the line [3]. In practice, this is also where traders can compare whether a strategy idea found through LuxAlgo’s AI Backtesting Assistant holds up under stricter validation standards.

Data Usage

After setting aside data for in-sample testing, reserve 20–30% of your historical dataset for out-of-sample validation. This portion of the data should remain untouched until the final testing phase. If your strategy fails during out-of-sample testing, resist the urge to retest on the same data. Doing so turns your validation data into training data, which undermines the integrity of the process [2]. For an added layer of confidence, many professionals recommend an incubation period - testing the strategy in a live demo environment for 6 to 12 months before risking actual capital [7].

Risk Exposure

It’s important not to tweak your model based on weak out-of-sample results, as this can lead to data snooping. In addition, testing across a variety of market conditions helps reduce regime bias. For example, if your out-of-sample period falls within a strong bull market and your strategy has a long bias, it may appear successful because the market rose rather than because the strategy is robust [3]. To address this, choose demanding out-of-sample periods that include different market conditions. If your in-sample data was mostly bullish, consider validating during a bear market or range-bound period as well [3].

Performance Expectations

A strong trading strategy should perform reasonably consistently across both in-sample and out-of-sample periods. As The Robust Trader notes:

"The main premise of out of sample testing is that true market behavior will be consistent throughout both data sets, while random market noise will not." [2]

It’s normal to see some performance decline out-of-sample since the strategy was not optimized for that data. However, a major drop-off is a warning sign. For example, if a strategy’s profit per trade falls from $200 in-sample to a $100 loss out-of-sample, that usually points to overfitting [3].
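
The profit-per-trade comparison above reduces to simple arithmetic. The sketch below expresses it as a retention ratio; the `oos_retention` and `looks_overfit` helpers are hypothetical, and the 0.5 cutoff is an arbitrary illustrative threshold, not a figure from the cited sources.

```python
def oos_retention(in_sample_value: float, out_of_sample_value: float) -> float:
    """Fraction of the in-sample figure that survives out-of-sample."""
    if in_sample_value <= 0:
        raise ValueError("in-sample value must be positive to compare")
    return out_of_sample_value / in_sample_value


def looks_overfit(retention: float, min_retention: float = 0.5) -> bool:
    """Flag a strategy whose out-of-sample result keeps too little of its edge.

    min_retention is an assumed cutoff chosen for illustration only.
    """
    return retention < min_retention


# The example from the text: $200 per trade in-sample, a $100 loss out-of-sample.
severe = oos_retention(200.0, -100.0)
# A milder, hypothetical decline: $200 in-sample, $150 out-of-sample.
mild = oos_retention(200.0, 150.0)

print(severe, looks_overfit(severe))
print(mild, looks_overfit(mild))
```

A negative ratio means the edge did not just shrink but reversed sign, which is a stronger warning than a modest decline.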

In-Sample vs. Out-of-Sample Analysis of Trading Strategies: Quantpedia Explains

Main Differences Between In-Sample and Out-of-Sample Testing

Understanding the differences between in-sample and out-of-sample testing is key to developing dependable trading strategies. While both rely on historical data, they play distinct roles in the strategy development process. These roles directly affect how you evaluate robustness, avoid bias, and decide whether a strategy deserves further testing.

Here’s a quick comparison of the two:

| Criterion | In-Sample (IS) Testing | Out-of-Sample (OOS) Testing |
|---|---|---|
| Purpose | Focuses on creating strategies, optimizing parameters, and refining rules to achieve the best historical performance [2][3] | Validates a strategy’s robustness and estimates how it might perform in live, unseen market conditions [2][3] |
| Data Allocation | Uses the initial "training" segment of historical data for model fitting and optimization [8][5] | Relies on a reserved "clean" dataset that remains untouched during the optimization phase [8] |
| Risk Exposure | Prone to overfitting, data dredging, and curve-fitting due to repeated parameter adjustments [4][6] | Can be skewed by random outcomes or data snooping if repeatedly tested [1] |
| Performance Expectations | Often yields impressive but unrealistic results since parameters are fine-tuned for the same dataset [5] | Typically delivers lower performance compared to in-sample results, which is expected [1] |

Research on backtest overfitting shows that after testing just seven strategy configurations, a trader can expect to find a 2-year backtest with an annualized Sharpe ratio above 1 even if the true out-of-sample Sharpe ratio is zero [6]. This is why the distinction matters so much: in-sample results can create false confidence.

When in-sample and out-of-sample performance stay relatively close, it’s a healthier sign that the strategy may have potential. Large gaps between the two usually point to overfitting and should trigger caution before moving toward live trading, paper trading, or deployment.

Pros and Cons

When evaluating in-sample and out-of-sample testing methods, it’s important to weigh their strengths and weaknesses. Both approaches are essential to strategy development and validation, but each brings different trade-offs.

In-sample testing is especially useful during early strategy development. It gives you a controlled environment to test ideas and study historical behavior. It also allows you to tune strategies for specific market conditions, such as high volatility or strong trends. The downside is the risk of curve-fitting - where the strategy starts to "memorize" random noise instead of identifying durable patterns. That’s why great in-sample performance alone is never enough.

Out-of-sample testing works as a safeguard against unreliable strategies. By approximating live market conditions, it offers a less biased view of how a strategy may perform in the future. But this approach has limitations too. Once you have consumed out-of-sample data for validation, it loses much of its value as a clean test set. Splitting data also reduces the amount available for training, which can be a challenge when working with newer markets or assets with limited history.

Here’s a quick comparison of the two methods:

| Testing Method | Main Advantages | Main Disadvantages |
|---|---|---|
| In-Sample | Provides a foundation for strategy development; allows controlled hypothesis testing; optimizes for specific market conditions. | Prone to overfitting and data dredging; may produce overly optimistic performance metrics. |
| Out-of-Sample | Filters out overfit strategies; offers a more objective performance evaluation; better approximates real trading conditions. | Limited to one meaningful use; reduces training data; may still reflect random variation. |

The key takeaway is simple: in-sample testing results are only the starting point. They must be validated before a strategy can be treated seriously. As Marcos López de Prado, a well-known quantitative researcher, puts it:

"The purpose of a backtest is to discard bad models, not to improve them."

Use in-sample testing to shape the strategy, but use out-of-sample testing to decide whether it deserves further research. If that strategy development process involves custom scripting, Quant can shorten the path from idea to deployable Pine Script by helping traders generate and refine TradingView strategies more efficiently.

Conclusion

In-sample and out-of-sample testing work best as a pair. In-sample testing helps you develop and improve a strategy, while out-of-sample testing checks whether it still makes sense when exposed to unseen data.

One of the most common mistakes traders make is treating strong in-sample results as proof that a strategy is reliable. The risk of overfitting is always present, and that is exactly why out-of-sample testing matters. It acts as an early warning system, helping you discard fragile strategies before they fail in live markets.

To preserve the integrity of your process, avoid re-optimizing strategies after out-of-sample tests. Techniques like walk-forward analysis or multiple randomized backtests can provide a broader view of performance across changing market conditions rather than relying on a single historical scenario [5]. Always account for trading costs in your tests, evaluate how sensitive the strategy is to parameter changes, and use a sensible data split - often 70% in-sample and 30% out-of-sample, supported by 5 to 20 years of historical data [2].

Even when a strategy passes out-of-sample testing, it is still wise to conduct paper trading or forward testing before risking real money [4]. Traders who want to speed up research can use LuxAlgo’s AI Backtesting Assistant to explore strategy ideas and use LuxAlgo Quant when they need to convert validated concepts into Pine Script indicators or strategies for TradingView. The goal is not to produce flawless backtests, but to build a resilient process that stands a better chance of surviving real markets.

FAQs

How can I prevent overfitting during in-sample testing?

To reduce overfitting during in-sample testing, keep your strategy as simple as possible. Focus on the most meaningful indicators and avoid loading the model with too many adjustable parameters. The more knobs you keep turning, the easier it becomes to fit random noise instead of real market behavior.

Another useful step is to reserve a validation set that remains untouched during optimization. After refining the strategy on your main in-sample data, test it on that reserved segment. If performance drops sharply, that is a strong sign that the model may be too tailored to the training data. For more robust evaluation, traders often use walk-forward or rolling-window analysis to test performance across multiple segments.
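
Walk-forward or rolling-window analysis can be sketched as a generator of paired index ranges. The version below is one simple rolling scheme under stated assumptions: fixed-size training and test windows, with each step advancing by the test size. The `walk_forward_windows` name and the window sizes are hypothetical choices for illustration.

```python
from typing import Iterator, Tuple


def walk_forward_windows(
    n_bars: int, train_size: int, test_size: int
) -> Iterator[Tuple[range, range]]:
    """Yield (train, test) index ranges that roll forward through the data.

    Each test window starts where its training window ends, and the pair
    then advances by test_size, so test windows never overlap.
    """
    if train_size <= 0 or test_size <= 0:
        raise ValueError("train_size and test_size must be positive")
    start = 0
    while start + train_size + test_size <= n_bars:
        train = range(start, start + train_size)
        test = range(start + train_size, start + train_size + test_size)
        yield train, test
        start += test_size


# Hypothetical example: 1,000 bars, optimize on 700, validate on the next 100.
windows = list(walk_forward_windows(n_bars=1000, train_size=700, test_size=100))
for train, test in windows:
    print(f"train {train.start}-{train.stop - 1}, test {test.start}-{test.stop - 1}")
```

In each step the strategy would be optimized on the `train` range only and then scored on the `test` range, giving several out-of-sample readings instead of one.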

Finally, stress test the strategy under realistic conditions. Include slippage, transaction costs, spread variation, and imperfect execution assumptions. If you are coding the strategy yourself, LuxAlgo Quant can help generate or validate Pine Script logic before you move on to broader backtesting and forward testing.
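
The effect of costs on a backtest can be sketched by deducting a per-trade charge from gross results. Everything in the example below is an assumption for illustration: the `CostModel` class, the flat dollar amounts, and the sample trade list are hypothetical, and real costs usually vary with position size, liquidity, and volatility.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CostModel:
    """Hypothetical flat per-trade cost assumptions, in account currency."""

    commission_per_trade: float = 2.0  # round-trip commission
    slippage_per_trade: float = 5.0    # assumed adverse fill on entry plus exit
    spread_per_trade: float = 3.0      # cost of crossing the bid-ask spread

    def total(self) -> float:
        return (
            self.commission_per_trade
            + self.slippage_per_trade
            + self.spread_per_trade
        )


def net_trade_pnl(gross_pnl: List[float], costs: CostModel) -> List[float]:
    """Subtract the same total cost from every trade's gross profit or loss."""
    return [pnl - costs.total() for pnl in gross_pnl]


# Hypothetical gross results for five trades.
gross = [120.0, -80.0, 45.0, 10.0, -30.0]
net = net_trade_pnl(gross, CostModel())
print(sum(gross), sum(net))
```

In this toy case most of the gross profit disappears once costs are deducted, which is why a strategy with a thin edge per trade deserves extra scrutiny before forward testing.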

Why should I use both in-sample and out-of-sample testing when developing a trading strategy?

Using both in-sample and out-of-sample testing improves the odds that your strategy is useful beyond a single historical dataset. In-sample testing helps you build and refine the model, while out-of-sample testing checks how it behaves on fresh, unseen data.

This two-step process helps reveal whether the strategy can handle different market conditions instead of just reflecting the quirks of a particular sample. It reduces the chance of overfitting and gives you more confidence that the backtest is measuring something real rather than accidental. Combined with forward testing, it creates a much more dependable research process.

What steps should I take if my trading strategy fails out-of-sample testing?

If your strategy fails out-of-sample testing, step back and review its rules for signs of overfitting. Look for excessive complexity, overly narrow filters, or parameters that only make sense for one historical environment. Simplify where possible instead of endlessly adding new conditions.

Once you have improved the logic, test it again on a fresh out-of-sample segment or use walk-forward analysis to see how it performs across different periods. During re-testing, include real-world trading costs such as spreads, commissions, and slippage, and confirm that the data itself is accurate and clean. If the strategy idea needs to be rebuilt or translated into code for TradingView, Quant can also help streamline that iteration process.

References

LuxAlgo Resources

- LuxAlgo AI Backtesting Assistant

- AI Backtesting Assistant Documentation

- LuxAlgo Quant

- Quant Documentation

External Resources

- In-Sample and Out-of-Sample Testing - Alvarez Quant Trading

- In-Sample and Out-of-Sample Testing - The Robust Trader

- Out of Sample Testing - Build Alpha

- Build Alpha

- The Robust Trader

- Backtesting in Trading: Definition, Benefits, and Limitations - Investopedia

- Backtesting and Forward Testing: The Importance of Correlation - Investopedia

- Overfitting - Investopedia

- Paper Trade - Investopedia

- Backtesting with Market Data - Portfolio Optimization Book

- Probability of Backtest Overfitting - SSRN

- Out-of-Sample Testing - Quantified Strategies

- Quantpedia

- Quantpedia Video: In-Sample vs. Out-of-Sample Analysis of Trading Strategies